Abstract

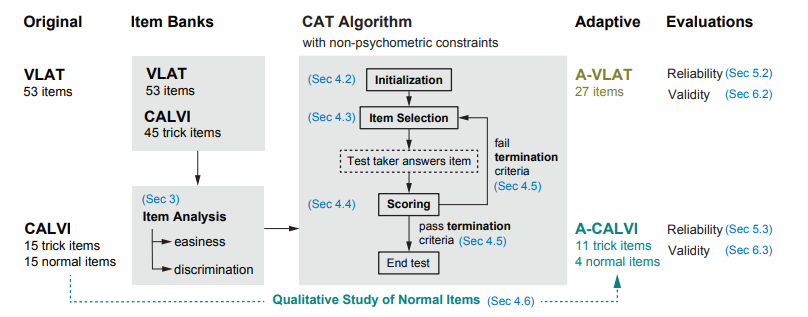

Visualization literacy is an essential skill for accurately interpreting data to inform critical decisions. Consequently, it is vital to understand how this ability evolves and to devise targeted interventions to enhance it, both of which require concise and repeatable assessments of individuals' visualization literacy. However, current assessments, such as the Visualization Literacy Assessment Test (VLAT), are time-consuming due to their fixed, lengthy format. To address this limitation, we develop two streamlined computerized adaptive tests (CATs) for visualization literacy, A-VLAT and A-CALVI (adaptive versions of VLAT and of CALVI, the Critical Thinking Assessment for Literacy in Visualizations), which measure the same set of skills as their original versions with half the number of questions. Specifically, we (1) employ item response theory (IRT) and non-psychometric constraints to construct adaptive versions of the assessments, (2) finalize the adaptation configurations through simulation, (3) refine the composition of A-CALVI's test items via a qualitative study, and (4) demonstrate the test-retest reliability (ICC: 0.98 and 0.98) and convergent validity (correlation: 0.81 and 0.66) of both CATs via four online studies. We discuss practical recommendations for using our CATs and opportunities for further customization to leverage the full potential of adaptive assessments. All supplemental materials are available at https://osf.io/a6258/.
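The abstract only names the machinery behind the CATs. As a rough illustration of how an IRT-based adaptive test works in general (not the authors' implementation), the Python sketch below assumes a two-parameter logistic (2PL) model, a small hypothetical item bank, maximum-Fisher-information item selection, expected a posteriori (EAP) ability estimation on a grid with a standard normal prior, and a fixed test length. The item parameters, the helper names such as `run_cat`, and the stopping rule are illustrative assumptions; the non-psychometric constraints mentioned in the abstract (for example, content balancing) are omitted.

```python
import math

# HYPOTHETICAL item bank: (discrimination a, difficulty b) under a 2PL IRT model.
# These values are invented for illustration; they are not VLAT or CALVI estimates.
ITEM_BANK = [(1.2, -1.5), (0.8, -0.5), (1.5, 0.0), (1.0, 0.7), (1.8, 1.2), (0.9, 2.0)]

# Quadrature grid for ability theta in [-4, 4], step 0.1.
GRID = [i / 10 for i in range(-40, 41)]


def p_correct(theta, a, b):
    """2PL probability of a correct response at ability theta."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def fisher_info(theta, a, b):
    """Fisher information of a 2PL item at ability theta: a^2 * P * (1 - P)."""
    p = p_correct(theta, a, b)
    return a * a * p * (1.0 - p)


def eap_estimate(responses):
    """EAP ability estimate with a standard normal prior, computed on GRID.

    responses: list of (item_index, is_correct) pairs.
    Returns (posterior mean, posterior standard deviation).
    """
    weights = []
    for theta in GRID:
        w = math.exp(-0.5 * theta * theta)  # unnormalized N(0, 1) prior density
        for idx, correct in responses:
            a, b = ITEM_BANK[idx]
            p = p_correct(theta, a, b)
            w *= p if correct else (1.0 - p)  # multiply in each item's likelihood
        weights.append(w)
    total = sum(weights)
    mean = sum(t * w for t, w in zip(GRID, weights)) / total
    var = sum((t - mean) ** 2 * w for t, w in zip(GRID, weights)) / total
    return mean, math.sqrt(var)


def next_item(theta, administered):
    """Pick the not-yet-administered item with maximum Fisher information at theta."""
    candidates = [i for i in range(len(ITEM_BANK)) if i not in administered]
    return max(candidates, key=lambda i: fisher_info(theta, *ITEM_BANK[i]))


def run_cat(answer, max_items=3):
    """Administer up to max_items items adaptively (fixed-length stopping rule, assumed).

    answer: callable item_index -> bool, standing in for the test taker.
    Returns (final ability estimate, its posterior SD, list of responses).
    """
    responses = []
    theta, se = 0.0, 1.0  # start at the prior mean and SD
    for _ in range(min(max_items, len(ITEM_BANK))):
        idx = next_item(theta, {i for i, _ in responses})
        responses.append((idx, answer(idx)))
        theta, se = eap_estimate(responses)  # re-estimate after every response
    return theta, se, responses
```

For example, `run_cat(lambda i: p_correct(1.0, *ITEM_BANK[i]) > 0.5)` simulates a deterministic test taker whose true ability is 1.0. Because each new item is chosen to be most informative at the current ability estimate, a CAT can reach a given measurement precision with fewer questions than a fixed-form test, which is the property the adaptive assessments above exploit.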

BibTeX

@article{cui2023adaptive,
  title={Adaptive Assessment of Visualization Literacy},
  author={Cui, Yuan and Ge, Lily W and Ding, Yiren and Yang, Fumeng and Harrison, Lane and Kay, Matthew},
  journal={IEEE Visualization and Visual Analytics (VIS)},
  year={2023}
}